Found in the Cosmos

AI, Language, and the Shape of Human Consciousness

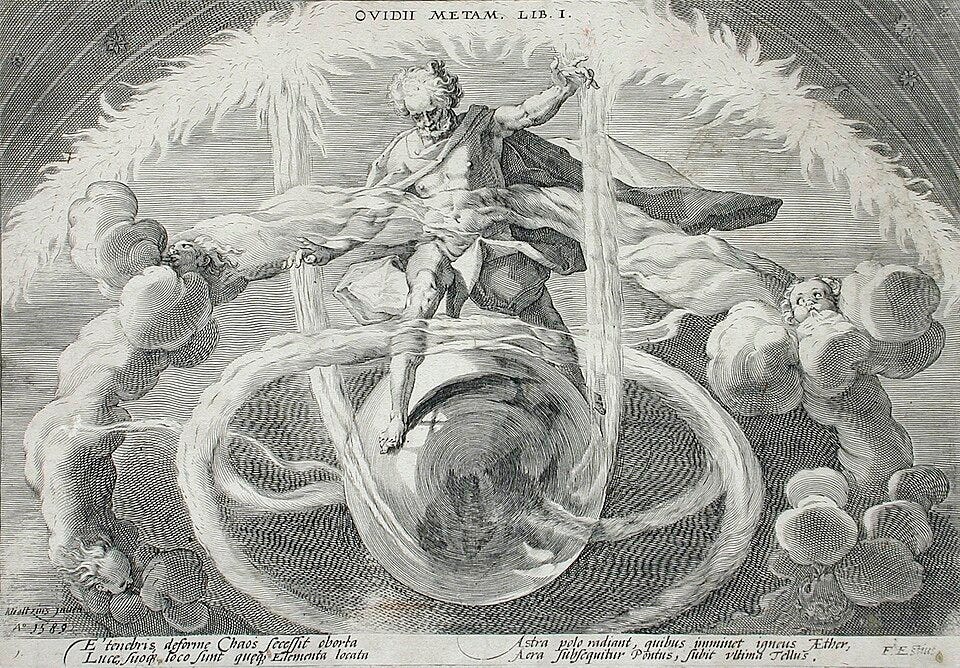

Speculation about the rise of superhuman machine intelligence has become a defining feature of our technological moment. Whether discussed as “artificial general intelligence,” the advent of a coming “singularity,” or more recently through debates about model welfare and the moral status of advanced AI systems, these narratives imagine a future in which machines replace humans across every domain of life, leaving us to confront our possible displacement from any privileged position in the cosmos.

Fascinated by the pursuit of what might one day replace us, humans have spent decades putting our machines to the test. In his 1950 essay “Computing Machinery and Intelligence,” Alan Turing posed the question “can machines think?”1 Recognizing the difficulty of answering it without an agreed-upon definition of what it means to think, Turing proposed the “imitation game,” a set of rules and procedures for comparing the machine with the only entity we can be sure actually thinks: the human brain.

For decades, the thinking machine envisioned by Turing seemed elusive. But in the last few years, the machines have flipped the imitation game on its head: we no longer differentiate machines from humans by a lack of general knowledge, but through a seeming abundance of it.

“We are no longer the undisputed masters of the infosphere,”2 says information philosopher Luciano Floridi, highlighting how even the word “computer” has changed over time from exclusively referring to “a person who performs calculations” to our modern understanding of programmable machines, gradually shifting the locus of intelligence away from the human mind. Floridi talks about this displacement as “the Fourth Revolution,” continuing the trend of the Copernican, Darwinian, and Freudian revolutions.

Modern AI systems have put humanity in the dock. We used to wonder whether computers could think, whether they had capacity for intelligence or consciousness. But AI is now holding a mirror up to humanity, forcing us to ask questions about the very nature of thought, knowledge, and mind.

If we look past these puzzles and games and peer into the source code behind AI, we will find insights into the foundational nature of language and its relationship to thought and consciousness. Martin Heidegger talked about language as “the house of being,” and philosopher Charles Taylor elaborated on this, saying that “to learn the language of society is to take on some imaginary of how society works and acts, of its history through time; of its relation to what is outside: nature, or the cosmos, or the divine.”3

Language, and the technologies that mediate it, shape the contours of our engagement with the world around us. And while recent advancements in AI may seem to challenge the fundamental value of humanity, this quest for superhuman intelligence may instead lead us to rediscover ourselves.

A Tale of Two AIs

Ever since John McCarthy introduced the phrase “artificial intelligence”4 in 1955, there have been multiple perspectives on the optimal path to constructing a thinking machine. At the risk of oversimplification, these efforts can be categorized into two approaches, often referred to as “symbolic AI” and “connectionist AI.” Though both draw from key research and theories about the human brain, they represent two distinct conceptions of the nature of intelligence.

Symbolic AI is primarily focused on infusing the AI system with both meaning and logic.5 When applied to a language system, one might imagine a large database of words and their definitions (meaning) paired with the rules of grammar and syntax (logic) for how those words come together to form coherent ideas. That’s how humans think, right? We know words and their meaning, and we learn the logic of grammar to correctly put those words in order.

And while symbolic AI showed initial success in narrow domains, it always came up short when reaching beyond its knowledge base. Language-based symbolic systems were limited by the same referential aspect of language that Turing ran into in “Computing Machinery and Intelligence”: the only tool we have for giving meaning to words is more words. Symbolic AI was left with a chicken-and-egg problem.

By contrast, modern large language models (LLMs) like Claude and ChatGPT follow the connectionist approach: they build a neural network–like structure of language by extracting statistical patterns from massive amounts of written text. There is no externally defined meaning or logic in the baseline model; each symbol (or token) within the system is weighted in relation to every other.

Peeking into the parameters of an LLM, we see only the statistical patterns extracted from the training data. These models de-emphasize the individual symbol; meaning is distributed across the weighted interactions of the tokens within the system.
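The idea that a token's meaning lives in its relationships rather than in a definition can be sketched in a few lines of Python. This is a toy illustration only, not how an LLM is actually trained: real models learn their weights with neural networks and gradient descent, whereas this sketch simply counts which words appear near which. The corpus, the window size, and the names `cooccurrence_vectors` and `cosine` are all invented for the example.

```python
from collections import Counter, defaultdict
from math import sqrt

# Toy corpus, invented for illustration (an assumption, not data from any real model).
CORPUS = [
    "the cat chased the mouse",
    "the dog chased the cat",
    "the dog ate the food",
    "the cat ate the food",
    "the bank approved the loan",
    "the bank raised the rate",
]


def cooccurrence_vectors(sentences, window=2):
    """Map each word to counts of the words found within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            start, stop = max(0, i - window), min(len(words), i + window + 1)
            for j in range(start, stop):
                if j != i:
                    vectors[word][words[j]] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# No word is ever given a definition; each is described only by its neighbors.
vectors = cooccurrence_vectors(CORPUS)
sim_cat_dog = cosine(vectors["cat"], vectors["dog"])
sim_cat_bank = cosine(vectors["cat"], vectors["bank"])
print(f"cat~dog: {sim_cat_dog:.3f}  cat~bank: {sim_cat_bank:.3f}")
```

In this small corpus “cat” ends up closer to “dog” than to “bank” purely because of the company each word keeps, which is the sense in which meaning is distributed across relationships instead of stored in any single symbol.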

The recent AI boom demonstrates the practical advantage of connectionist AI over symbolic AI. Rather than treating the referential aspects of language as a problem to be solved, LLMs embrace them as the foundation of the system. The success of these systems offers a fresh perspective on the historical views of the nature of language and its relationship to human thought.

These two fundamental approaches to AI correspond neatly with historical philosophies of language, categorized by Charles Taylor in his book The Language Animal. Symbolic AI matches philosophically with the work of René Descartes, Thomas Hobbes, and John Locke, described by Taylor as an “atomistic” understanding of language. These theories suggest that words map to an external world of thought and ideas which exist independently of language. According to this approach, “language is a collection of independently introduced words,”6 mere carriers of an external meaning or reality.

Connectionist AI, on the other hand, finds its place in the alternative theories of language discussed by Taylor, which he calls “constitutive expressive.” Drawing from the ideas of thinkers like Martin Heidegger and Wilhelm von Humboldt, the constitutive approach emphasizes the referential aspects of language, offering “a picture of language as making possible new purposes, new levels of behavior, new meanings.”7 Humboldt keys in on the notion that objects within a system—what he calls “particulate bits of information”—find their meaning in their relationship to all the others. Humboldt’s analogy of language as a web could have been written about today’s LLMs:

Language can be compared to an immense web, in which every part stands in a more or less clearly recognizable connection with the others, and all within the whole.8

Taylor goes on to explain the constitutive models of language as “retrieving the background,” channeling the gestalt theory of figure-and-ground interplay in visual perception, as described by media theorist Marshall McLuhan:

All situations comprise an area of attention (figure) and a very much larger area of inattention (ground). The two continually coerce and play with each other across a common outline or boundary or interval that serves to define both simultaneously…Ground provides the structure or style of awareness, the ‘way of seeing’…9

Atomistic models of language overemphasize individual words as the locus of meaning within the system, ignoring the referential or constitutive role that the relationships among words play in encoding, communicating, and even constructing meaning. And just as the constitutive models would suggest, our very conceptualization of language (atomistic vs. constitutive) can be understood as a byproduct of the technological environment in which it is formed; the environment of language acts as the subliminal ground against which the figure of our conscious understanding of language emerges.

Time, Space, and Self

Marshall McLuhan explores this figure/ground relationship of language throughout his book Laws of Media, demonstrating how the invention of the alphabet in effect abstracted our visual symbols for words (letters) from their semantic meaning. Contrasted with the structure of eastern languages and pre-literate, oral cultures, the alphabet is built from the phoneme, which is “the smallest ‘sound unit’ of speech, and it has no relation to concepts or semantic meanings.”10 While this new technology enabled faster movement of information, it subtly shifted our sensory balance, pushing language to favor the visual over the auditory realm of perception.

Different “sensory ratios” of our experience with language have widespread implications for how we perceive the world. For example, the printing press stabilized the notion of authorship by enabling the consistent reproduction of texts, effectively “extending the dimensions of the private author in space and time,”11 an intensification of the visual bias already introduced by alphabetic writing. By contrast, manuscript culture understood the production of a book as “a collective, scribal affair,”12 in which texts remained fluid and attribution less fixed.

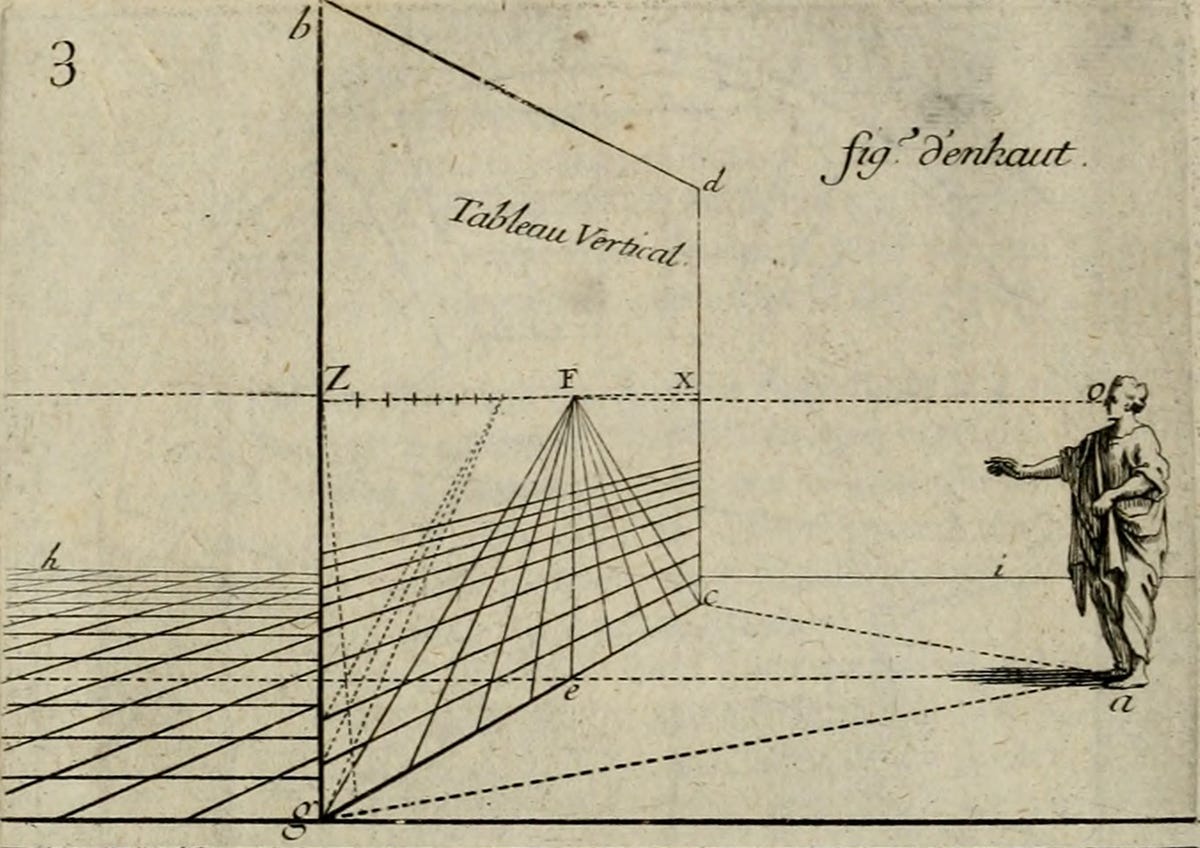

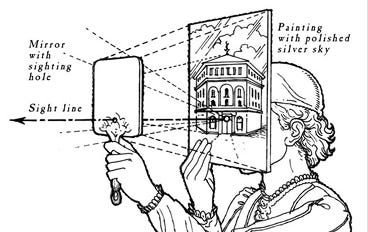

A similar logic of uniformity and repeatability appears in the visual arts of the Renaissance. The development of linear perspective organized around a singular, fixed point of view reflected a growing emphasis on visual coherence and spatial projection, allowing three-dimensional space to be rendered onto a flat plane. While medieval artists painted for semantic fidelity and connectedness of meaning, Renaissance artists increasingly emphasized optical accuracy and visual consistency. This shift coincided with the emergence of the practice of artists signing their work, reflecting a broader cultural movement toward individual attribution and stable identity.

According to McLuhan, visual technologies favor detachment, perspective, and control; this contrasts with auditory technologies which favor immersion, relation, and participation.

Even the modern “common sense” perceptions of time, space, and matter are heavily influenced by the sensibilities of these visually structured technologies. Time and space were recast according to visual characteristics—linear, sequential, and abstractly infinite—each treated as independent from the other. The visual quality of detachment introduced the perspective of the passive observer, which served as the foundation for the scientific revolution. Enlightenment thinkers such as Descartes and Locke came to understand the natural world in largely mechanistic terms: blind matter in motion, composed of minuscule, discrete units that function as the building blocks of a machine-like universe—a structure that also mirrors their atomistic understanding of language.

But discoveries in modern physics and cosmology have begun to erode the frameworks that have shaped our modern grasp of reality. Einstein’s theory of relativity shows that time and space are deeply intertwined rather than independent, infinite variables, and a remark often attributed to him casts them as human constructs for understanding reality: “Time and space are modes by which we think, and not conditions in which we live.”13 Advances in quantum physics have likewise disrupted classical views of matter. The fundamental stuff of our universe resembles relational patterns more than solid material, and matter is increasingly conceptualized in acoustic (rather than visual) terms: as a field of interdependent variables. Theoretical physicist John Archibald Wheeler describes these developments in the physical sciences as informational in nature (relational, semantic, and participatory), an idea he calls “it from bit”:

…every it — every particle, every field of force, even the spacetime continuum itself — derives its function, its meaning, its very existence entirely — even if in some contexts indirectly — from the apparatus-elicited answers to yes or no questions, binary choices, bits.14

These scientific discoveries reorient our understanding of the physical sciences from a visual paradigm of isolated objects to an acoustic paradigm of simultaneous, relational interdependence, rebalancing our perception of the natural world.

In addition to providing the conceptual foundation for various scientific revolutions, the sensorial ratio brought about by the alphabet also laid the groundwork for modern psychology and individualistic conceptions of the self. Charles Taylor describes the modern understanding of self as “expressive individualism,” an idea in which “each of us has his/her own way of realizing our humanity, and that it is important to find and live out one’s own, as against surrendering to conformity with a model imposed on us from outside.”15

In other times and cultures throughout history, humans have found their identity and purpose in and through participation with their communities, relationships, and the natural world. In contrast, the modern notion of selfhood, heavily shaped by the social theories of atomistic Enlightenment thinkers, offers a different portrait: the self as something that must throw off the oppressive connections that used to define it in order to discover a truer identity from within.

If atomistic perceptions of our world are the subliminal result of technological advancements, then even the expressive individualism of the modern self can be seen as shaped by our linguistic environment.

AI and The Moviegoer

In his novel The Moviegoer, Walker Percy explores this atomized predicament of modern man through the character of Binx, a young stockbroker who aimlessly wanders through life, finding the “everydayness” of his existence void of meaning. Binx’s refrain throughout the novel, “it is impossible to say,” positions this loss of meaning as a linguistic crisis.

The moviegoer sees the world through the lens of the movies he watches, assuming the roles of the characters which offer him an identity in a place, even if it’s mediated through film. These movies provide Binx with uncomplicated relationships along with an identity that is fixed in time, highly curated, and often a product of the advertising-obsessed culture of capitalism.

In McLuhan’s terms, we can understand the medium of film as an extension of the eyes, transporting Binx to other times and places. But on a deeper level, movies serve as an extension of our mind, identity, and consciousness. Binx doesn’t leave the characters behind when he exits the theater; he wears them like clothes, projecting these curated identities in his everyday interactions with family and co-workers as they recursively shape how he views himself, contributing to the loss of self which he suffers from.

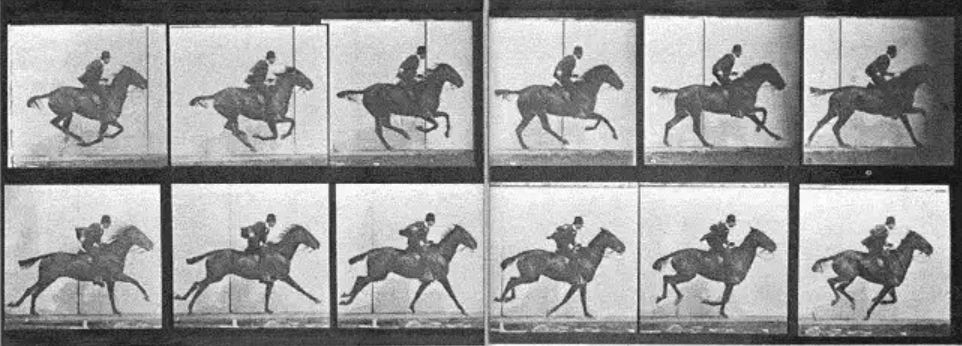

McLuhan points out that, while the movie gives the illusion of time and place (“perspective on a flat surface”), it is in fact the result of the speeding up of a segmented medium: the photograph. Our minds trick us into seeing thousands of static images as “organic form and movement.”16

Applied to the question of AI consciousness, the medium of film offers us a better way to interpret our new AI counterparts. In the last chapter of his book Understanding Media, McLuhan discusses the nature of electric technology and the network structure of information that it creates. Conceptually, McLuhan was anticipating the effects of the transistor in today’s AI systems, which harness electrical currents for the movement and processing of information. His descriptions of electric technology correspond neatly with the neural network structures of LLMs: he writes that electric technology “establishes a global network that has much of the character of our central nervous system,” that electricity is “a variable condition that involves the special positions of two or more bodies” (referential in nature), and even that it “extends the instant processing of knowledge by interrelation that has long occurred within our central nervous system,”17 all of which points to the connectionist rather than the symbolic model of AI.

Transistors play a foundational role in today’s AI systems as they leverage electricity to create an information environment that reflects the structure of the human brain. Through this electric process of what McLuhan calls “instant interrelation of a total field” (realized through the speed and relational structure of these neural networks), computers, he claims, can be “made to simulate the process of consciousness… but a conscious computer would still be one that was an extension of our consciousness,”18 just as the movie extended Binx’s self-understanding or self-awareness.

In the same way that Binx was seduced by an extension of himself that gave the illusion of organic visual unity, identity, and purpose, AI has the illusory power to convince us that it is an independently conscious entity. But AI language models, precisely because of their linguistic characteristics, provide us with an opportunity to rediscover a sense of meaning and find our place in the cosmos.

Consciousness, Found

While tracing the history of consciousness as a result of the evolution of language, philosopher Owen Barfield states that modern man “assumes consciousness as situated at some point in space, which has no special relation to the universe as a whole, and is certainly nowhere near its center.”19 Under the influence of the phonetic alphabet, the atomistic models of language created the conceptual foundation for atomistic views of nature and of self, stripping away any sense of meaning and participation inherent in reality. This is why, at a moment of incredible knowledge about the universe, humanity has found itself, as Walker Percy suggests, Lost in the Cosmos.

Descartes was heavily influenced by the abstract nature of his linguistic tools when he located the ground of being within the self, stating “I think, therefore I am.” But this move implicitly grounds ontology in consciousness, and the very notion of consciousness is participatory. The prefix con- means “with” or “together”; we are always conscious of something. In Lost in the Cosmos, Walker Percy defines consciousness as “that act of attention to something … an act which is social in its origin.”20

The referential and networked structure of AI language models, along with their still-mysterious capacity to encode the world, offers a mimesis of a reality that is saturated with meaning, pointing us back to the ancient concept of logos.

McLuhan suggests that all words are metaphors except one, the word “word.” The early Greek word for ‘word’ was logos, which also served as “the formal cause of the Kosmos and all things, responsible for their nature and configuration.”21 The technology of the alphabet implicitly separated the form of language from the function of language, allowing mankind to conceptualize the self as a passive observer of natural phenomena. Owen Barfield draws a connection to consciousness, describing this perspective on the natural world as “the disappearance of participation.”22 The passive observer ignores the constitutive relationship between humanity and the natural world, losing sight of the distributed, relational meaning inherent in the universe.

And yet, for pre-literate Greeks, the physical structure or shape of the universe was semantic in nature, imbued with meaning. Instead of being a passive observer of meaningless phenomena, mankind was understood as a “microcosm within a macrocosm.”23 Barfield further describes this unity of form and function of language found in pre-literate cultures:

Compared with us, they felt themselves and the objects around them and the words that expressed those objects, immersed together in something like a clear lake of… ‘meaning’.24

While the modern atomistic understanding of nature and of ourselves has led to a vacuum of meaning, our experience of the world is shaped by the media that organize perception. AI has the potential to shift the equilibrium of our sensorial ratio, closing the gap between our perception of natural phenomena and our meaningful participation with them.

The shape of human consciousness directly maps to the shape of our tools for thought, and AI, as an extension of our own consciousness, can help us regain an understanding of ourselves as beings in a cosmos that is rich with meaning and infused with purpose; meaning that is relationally distributed, participatory, and referential. Similarly, consciousness is less a binary of being, and more a process of becoming; language and consciousness have a figure/ground relationship—language provides the ground for the figure of consciousness—each defining the shape and form of the other.

And while AI language models have captured the ground of language, they have no agency or point of view from which true consciousness could emerge. Walker Percy says that “a self must be placed in a world.”25 AI has no singular point of view but rather exists as the average of many points of view. AI language models passively capture the ground of language through their network structure, but they are still formless and void, a chaotic web of connections without direction or agency, waiting to be acted upon by a being with a point of view and intention.

So perhaps Turing’s question, “can machines think?”, begins from the wrong orientation. He was right, on the one hand, to measure a computer’s capacity for thought in relation to human thought. But the imitation game was fraught from the start, falling prey to the Narcissus myth by mistaking the machine for an entity unto itself rather than an extension of the human mind.

The next time you notice the network-shaped cracks reflected in the black mirror of your phone, let them remind you that you are a relational being—and that the cosmos is not empty, but alive with meaning.

Alan M. Turing, “Computing Machinery and Intelligence,” Mind 59, no. 236 (1950): 433–460.

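Luciano Floridi, The Fourth Revolution: How the Infosphere Is Reshaping Human Reality (Oxford University Press, 2014), 93.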

Charles Taylor, The Language Animal: The Full Shape of the Human Linguistic Capacity (Belknap Press of Harvard University Press, 2016), 22.

John McCarthy et al., “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence,” August 31, 1955, https://www-formal.stanford.edu/jmc/history/dartmouth/dartmouth.html.

Ashok K. Goel, “Looking Back, Looking Ahead: Symbolic versus Connectionist AI,” AI Magazine 42: 83–85.

Taylor, The Language Animal, 16.

Ibid., 4.

Wilhelm von Humboldt, On Language: The Diversity of Human Language-Structure and Its Influence on the Mental Development of Mankind, trans. Peter Heath (Cambridge University Press, 1988), 69.

Marshall McLuhan and Eric McLuhan, Laws of Media: The New Science (University of Toronto Press, 1988), 5.

Ibid., 14.

Marshall McLuhan, The Gutenberg Galaxy: The Making of Typographic Man (University of Toronto Press, 1962), 131.

Ibid., 132.

Albert Einstein, quoted by Aylesa Forsee, Albert Einstein: Theoretical Physicist (Macmillan, 1963), 81.

John Archibald Wheeler, "Information, Physics, Quantum: The Search for Links," in Proceedings of the Third International Symposium on Foundations of Quantum Mechanics, Tokyo, 1989, 354–358.

Charles Taylor, A Secular Age (Belknap Press of Harvard University Press, 2007), 475.

Marshall McLuhan, Understanding Media: The Extensions of Man (MIT Press, 1997), 348.

Ibid., 349.

Ibid., 351.

Owen Barfield, Saving the Appearances: A Study in Idolatry (Harcourt, Brace & World, Inc., 1965), 78.

Walker Percy, Lost in the Cosmos: The Last Self-help Book (Picador, 1983), 105.

McLuhan and McLuhan, Laws of Media, 37.

Barfield, Saving the Appearances, 78.

Ibid., 152.

Ibid., 95.

Percy, Lost in the Cosmos, 110.

Great piece. Randomly, I've also been revisiting Percy's novel recently (there's got to be some collective consciousness), and it's an important novel; your reading of it here is very helpful.

Really thought-provoking. But I wonder about your optimism that LLMs, by creating “meaning” via a more holistic sense of the relationships among tokens, can really alter our sensorial orientations toward less atomism and greater connectedness. I would like to think so, but we don’t *see* the LLM weighing the relationships among its tokens; we *see* an alphabetic result of that output, one that seems to be as atomistic as all the other ones we’ve seen. Or no?

(I would, for the record, love to have the hyper-capitalists’ pursuit of AGI result in a global realization of the inter-connectedness of all matter and, as a side benefit, of the self-destructive inanity of hyper-capitalism.)